Xenu Link Sleuth vs. PBN Lab

I’ve been asked how, and why, PBN Lab is better than Xenu Link Sleuth so many times now that I thought I’d write a post to explain.

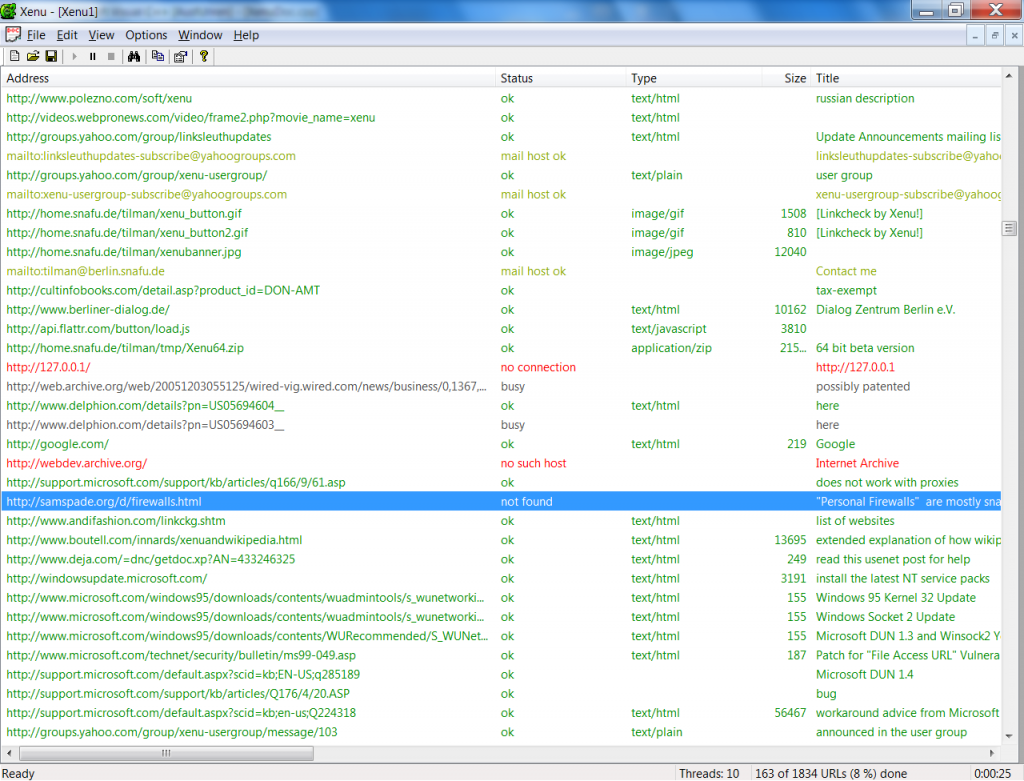

Xenu Link Sleuth is a tool designed simply to find dead links.

When it comes to crawling for expired domains, it offers nothing beyond that, and finding dead links is only the beginning of the process.

All of the important steps that follow the crawl itself are horrible, time-burning, manual processes when you use Xenu.

This is what makes PBN Lab worth the investment: those steps take none of your time, because the hardest part of finding expired domains has been completely automated!

The manual method, using Xenu Link Sleuth:

Job set up time: 10 seconds

Crawl execution time, not including post-processing: 10 to 60 minutes (hands off)

Total job processing time required by you: 10 to 120 minutes, depending on quantity of results

1. Enter the URL of a single website. Xenu crawls the site, checking all of its outbound links, and when it’s done it simply reports the dead links it found.

2. Copy the list of dead links, paste it into Excel, and apply a formula to extract the domain names from the URLs.

3. Create a list of unique domain names from the results of step 2, then run that list through a bulk domain availability checker (a feature offered on many domain registrar websites).

4. Filter the results down to just the domains that are available.

5. Look up each domain’s Moz metrics in Open Site Explorer. This has to be done one domain at a time or, at best, in batches of 5 using the compare metrics function.

6. Copy and paste the metric values from Open Site Explorer back into individual columns in your spreadsheet.

7. Look up each domain’s Majestic metrics, one domain at a time, and copy the results back into your spreadsheet.

8. Repeat steps 5, 6 and 7 for every available domain you found, which can mean tens, hundreds or even thousands of repetitions.
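If you’d rather not wrestle with Excel formulas, the domain-extraction and de-duplication part of the manual method can be scripted. Here’s a minimal Python sketch, assuming you’ve exported Xenu’s dead links as a plain list of URLs; the function names `extract_domain` and `unique_domains` are just for illustration. It lowercases each host and strips a leading `www.`, but it does not reduce subdomains to their registrable domain (for example, `blog.example.co.uk` stays as-is), which would need the Public Suffix List.

```python
from typing import List, Optional
from urllib.parse import urlsplit


def extract_domain(url: str) -> Optional[str]:
    """Return the lowercased host of a dead-link URL, minus a leading 'www.'.

    Simplification (assumption): subdomains are NOT collapsed to the registrable
    domain, so blog.example.co.uk stays as-is; that would need the Public Suffix List.
    """
    url = url.strip()
    # urlsplit only finds the host after '//', so add a scheme if one is missing.
    if "://" not in url:
        url = "http://" + url
    host = urlsplit(url).hostname  # drops any port or credentials and lowercases
    if not host:
        return None
    if host.startswith("www."):
        host = host[len("www."):]
    return host


def unique_domains(dead_links: List[str]) -> List[str]:
    """De-duplicate domains across all dead links, keeping first-seen order."""
    seen = {}  # dict keys preserve insertion order (Python 3.7+)
    for link in dead_links:
        domain = extract_domain(link)
        if domain:
            seen.setdefault(domain, None)
    return list(seen)


if __name__ == "__main__":
    dead_links = [
        "http://www.example.com/page1",
        "https://Example.com/page2",
        "http://old-blog.net:8080/post?id=3",
    ]
    print(unique_domains(dead_links))  # ['example.com', 'old-blog.net']
```

The resulting list can then be pasted into a bulk domain availability checker, exactly as in the manual process.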

Crawling for expired domains using PBN Lab:

Job set up time: 20 seconds

Crawl execution time, not including post-processing: 10 to 30 minutes (hands off)

Total job processing time required by you: 0 seconds

- Start a new job by generating a seed list of URLs to crawl, using a simple keyword search.

- You can also crawl a single site or web directory by entering a single URL.

- Alternatively, you can manually load your own list of seed URLs.

- Let the job run while you queue up more jobs, or do anything else, as the crawler automatically:

  - Crawls thousands of URLs per minute, identifying dead links as it goes (and predicting expired domains with a complex, proprietary algorithm to find more domains in less time).

  - Determines which domains may be available, based on the list of dead links it has found.

  - Validates each domain’s availability with a domain registrar, or against live WHOIS data.

  - Fetches the Moz metrics for every single domain using Moz’s Linkscape API (the same data used in Open Site Explorer).

  - Fetches the Majestic metrics for each domain whose Moz metrics are strong enough to make it worthwhile (Majestic data is quite expensive via their API), including Trust Flow topics, backlinks, referring domains, referring IPs and more.

- PBN Lab’s crawler reports only the available domains it found to your dashboard, along with all of their collected metrics, in a simple, dynamic view where you can quickly filter your domains by their metrics or TLD type. You can view just the last crawl you ran, or all of your previous crawls combined.

- Assess your domain results in less than a minute, and mark domains you love as favorites. Trash the domains you hate. Ignore the rest.

- Rinse and repeat!
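The Majestic step above only runs for domains whose Moz metrics justify the cost. PBN Lab’s exact rules aren’t covered in this post, so here is a hypothetical Python sketch of the general “cheap lookup first, expensive lookup second” pattern. The fetcher callables, the `domain_authority` and `trust_flow` keys, and the `>= 20` threshold are all placeholders, not real Moz or Majestic API calls or field names.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Metrics = Dict[str, float]  # hypothetical: metric name -> value


@dataclass
class DomainResult:
    domain: str
    moz: Metrics
    majestic: Optional[Metrics] = None  # stays None when the Majestic lookup was skipped


def enrich_domains(
    domains: List[str],
    fetch_moz: Callable[[str], Metrics],       # placeholder for a cheap metrics lookup
    fetch_majestic: Callable[[str], Metrics],  # placeholder for an expensive metrics lookup
    worth_majestic: Callable[[Metrics], bool], # decides whether the expensive call is justified
) -> List[DomainResult]:
    """Fetch cheap metrics for every domain, expensive metrics only for promising ones."""
    results: List[DomainResult] = []
    for domain in domains:
        # Stage 1: the cheaper lookup runs for every available domain.
        moz = fetch_moz(domain)
        result = DomainResult(domain=domain, moz=moz)
        # Stage 2: only pay for the expensive lookup when stage 1 looks promising.
        if worth_majestic(moz):
            result.majestic = fetch_majestic(domain)
        results.append(result)
    return results


if __name__ == "__main__":
    # Hypothetical stub data standing in for real API responses.
    fake_moz = {
        "example.com": {"domain_authority": 35.0},
        "old-blog.net": {"domain_authority": 8.0},
    }
    rows = enrich_domains(
        list(fake_moz),
        fetch_moz=lambda d: fake_moz[d],
        fetch_majestic=lambda d: {"trust_flow": 20.0},
        worth_majestic=lambda m: m["domain_authority"] >= 20,  # placeholder threshold
    )
    for row in rows:
        print(row.domain, row.majestic)
```

Passing the gating rule in as a function keeps the threshold out of the crawl logic, so it can be tuned without touching the rest of the pipeline.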